Max Zimmer

Postdoctoral Researcher at the Zuse Institute Berlin

PhD in Mathematics, TU Berlin (2026)

Research

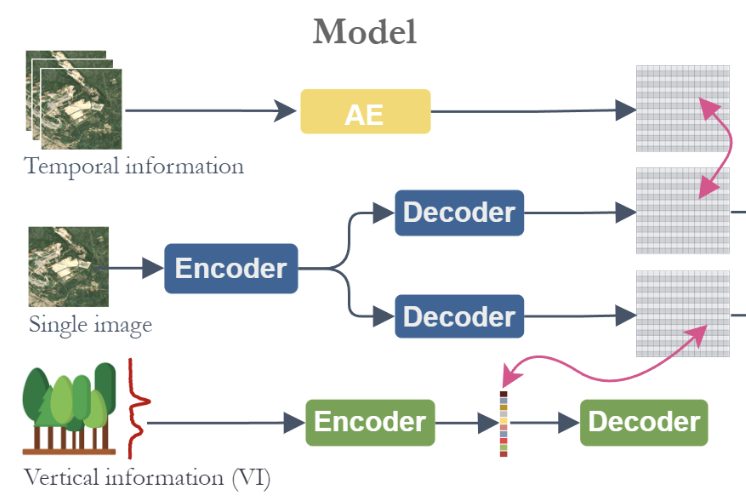

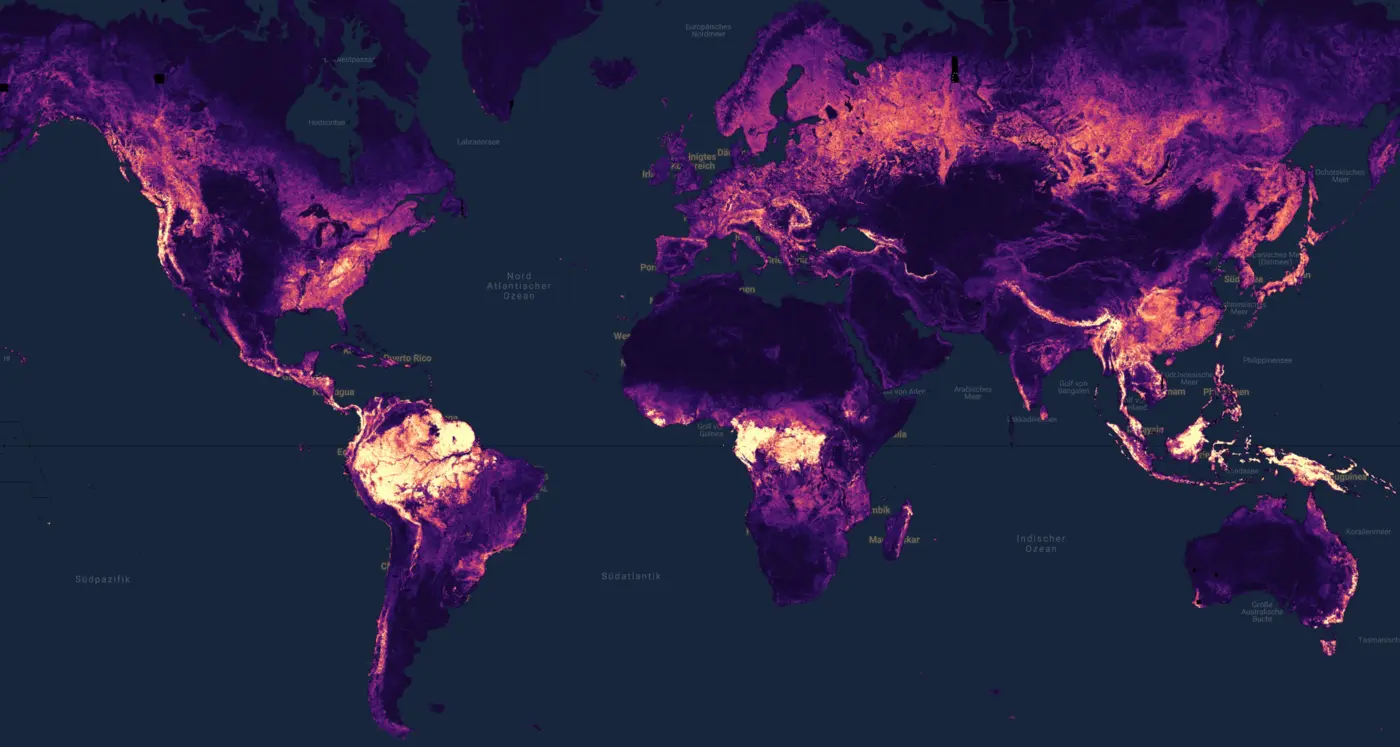

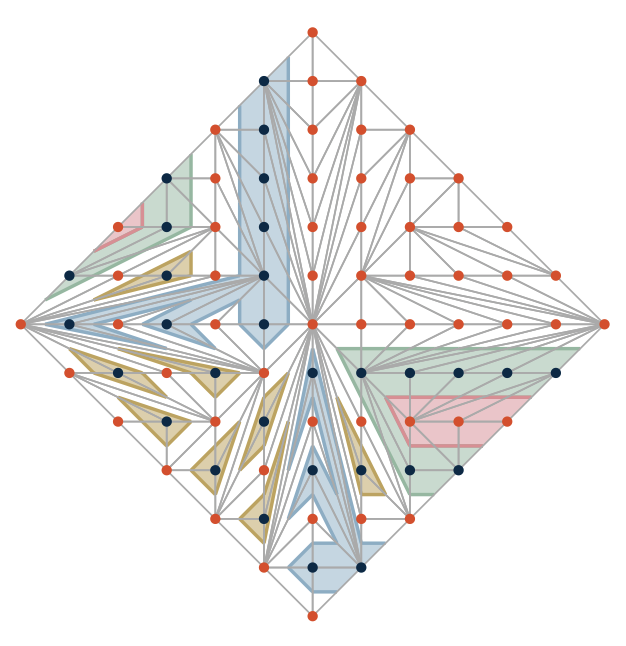

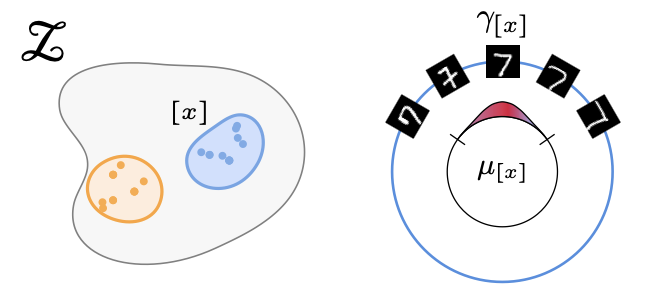

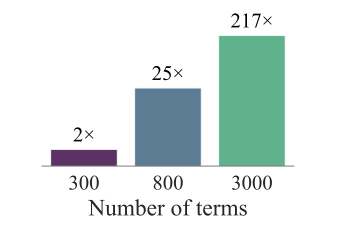

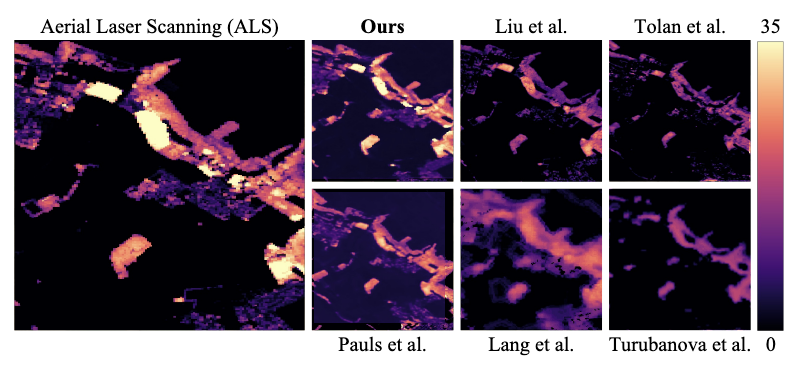

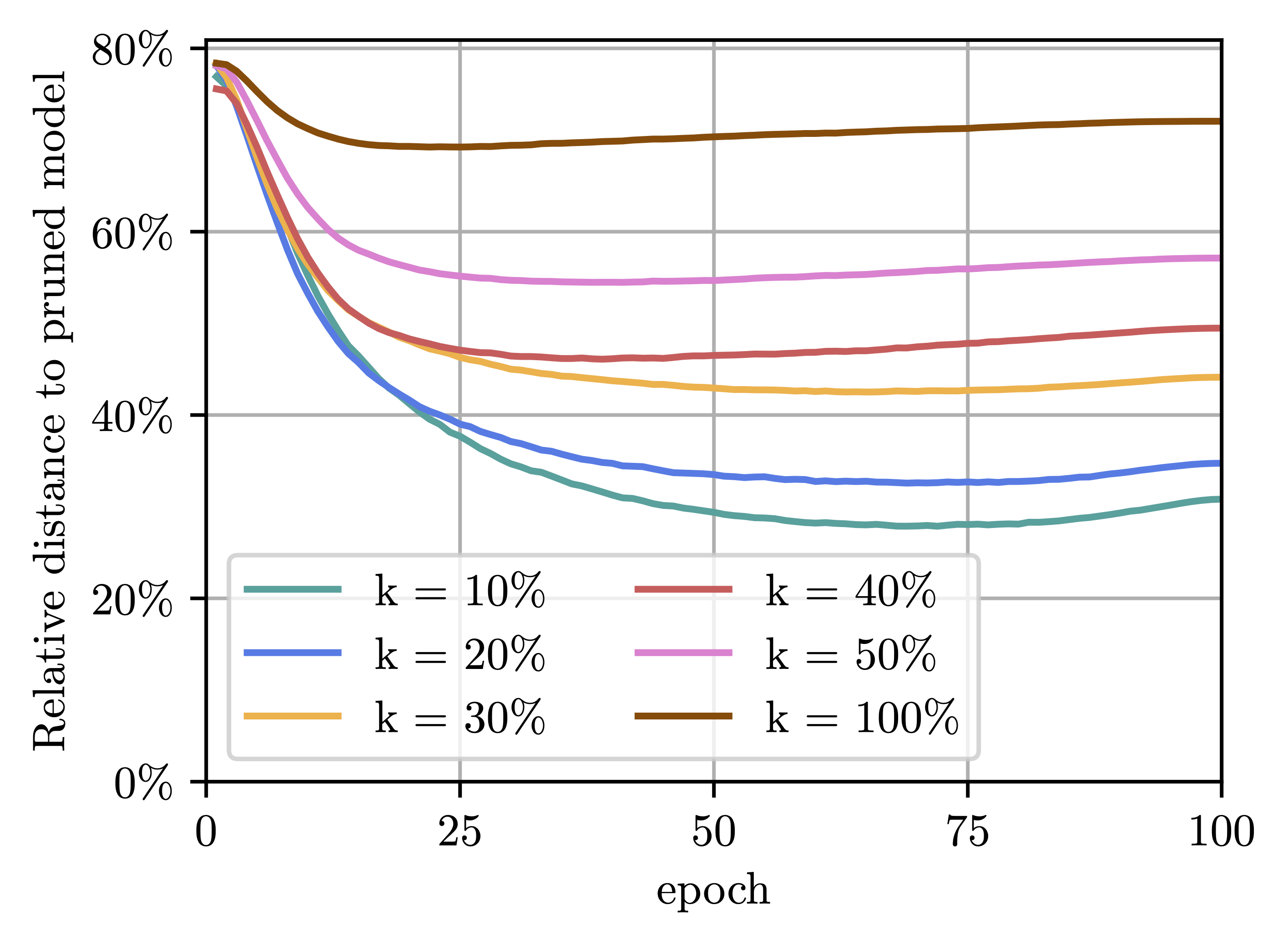

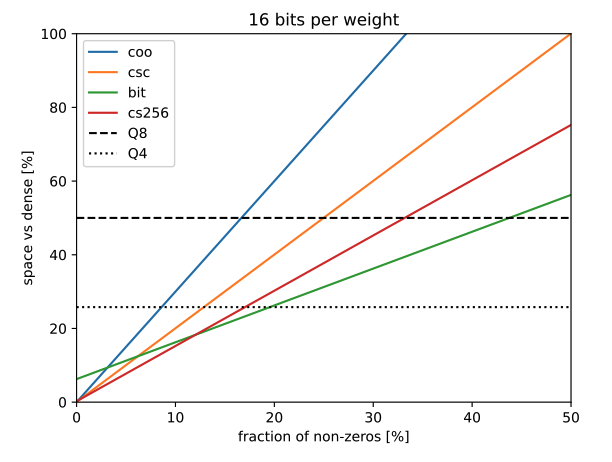

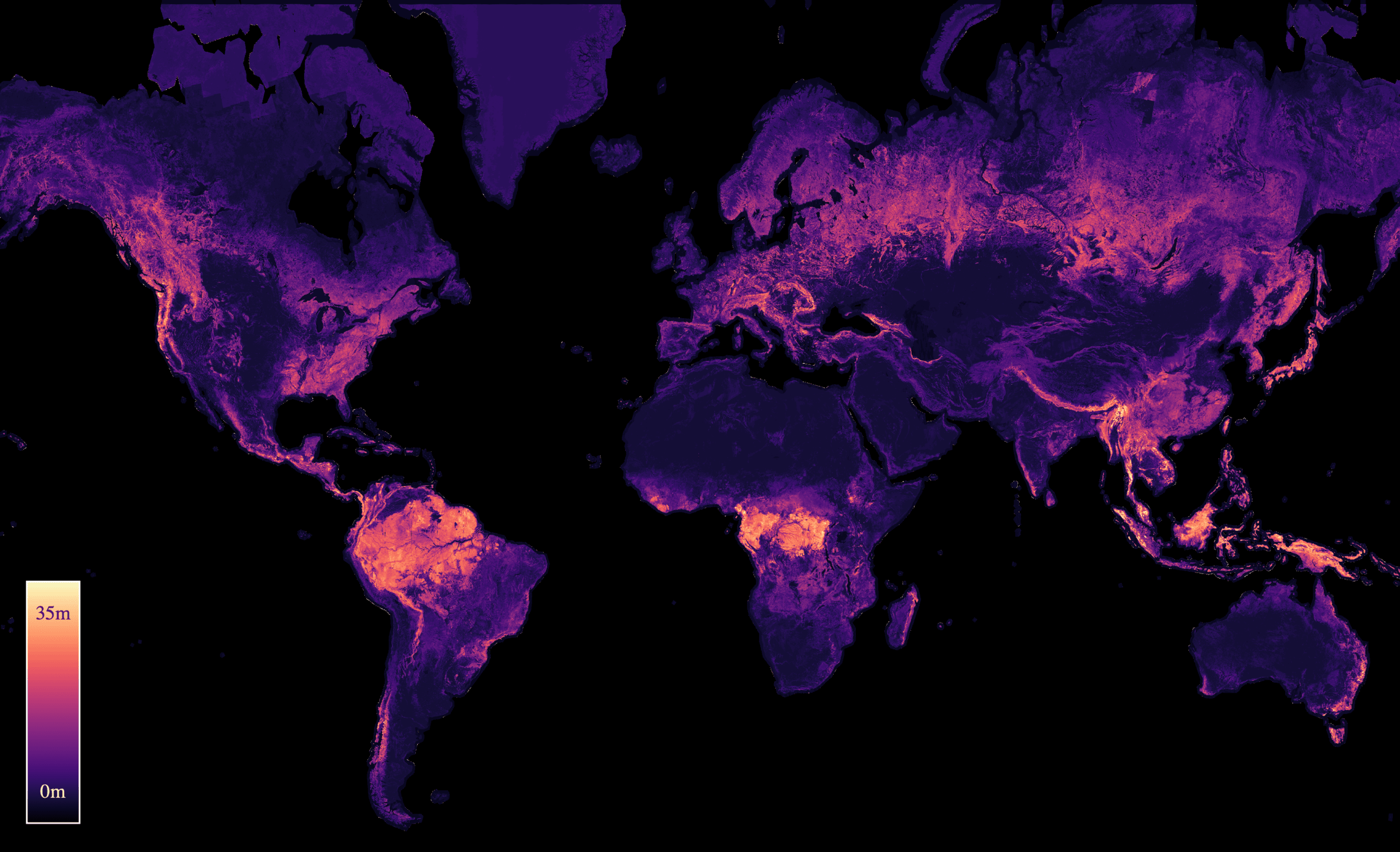

My research focuses on the Efficiency of neural networks (from weight sparsity and quantization to KV cache compression and speculative decoding), on AI4Math, and on Agentic AI. I also work on Optimization, on applying Deep Learning to sustainability challenges (e.g., forest monitoring), and, more recently, on the post-training paradigm. At ZIB, I lead the iol.LEARN Deep Learning group. I received my PhD in Mathematics from TU Berlin in 2026, advised by Prof. Dr. Sebastian Pokutta. Please take a look at my list of publications and feel free to reach out with questions or about potential collaborations! You can find TLDRs of some of my papers on my blog and a collection of interesting links and AI news here.

Previously

I studied Mathematics at TU Berlin (BSc 2017, MSc 2021), with a semester abroad at the Università di Bologna. During my studies, I worked on combinatorial optimization with Leon Sering at the COGA Group and interned in the research groups of Prof. Sergio de Rosa at Università degli Studi di Napoli Federico II and Prof. Marco Mondelli at IST Austria. I joined the IOL Lab in 2020 as a student researcher, became a doctoral researcher in 2021, and have led the Deep Learning research group since 2024. I received my PhD in 2026. I am also a member of the Berlin Mathematical School and the MATH+ Cluster of Excellence. You can find my full CV here.


Latest publications


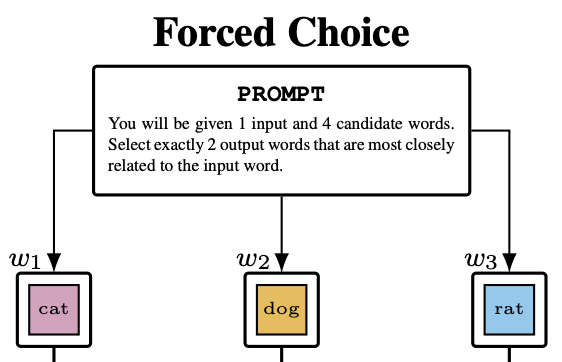

Preprint arXiv preprint arXiv:2606.08204 2026


ICML26 Forty-third International Conference on Machine Learning 2026


ICML26 Forty-third International Conference on Machine Learning 2026


ICMS26 Mathematical Software - ICMS 2026 - 9th International Conference, Waterloo, Canada, July 20-23, 2026, Proceedings 2026


Workshop ICML 2026 Workshop: AI as a Tool for Mathematics, Computer Science, and Machine Learning 2026


Preprint arXiv preprint arXiv:2602.21421 2026


ICLR26 The Fourteenth International Conference on Learning Representations 2026


ICLR26 The Fourteenth International Conference on Learning Representations 2026


Preprint arXiv preprint arXiv:2512.10922 2025

Preprint arXiv preprint arXiv:2512.10507 2025

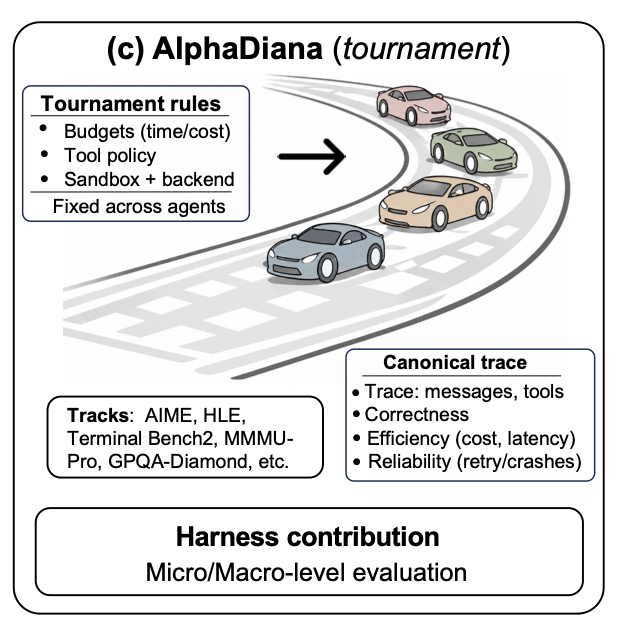

Preprint arXiv preprint arXiv:2510.14444 2025

* equal contribution

Preprint arXiv preprint arXiv:2510.13713 2025

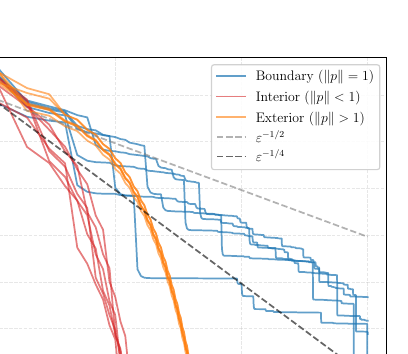

* equal contribution

ICML25 Forty-second International Conference on Machine Learning 2025

Journal Mathematical Optimization for Machine Learning 2025


Workshop ICLR25 Workshop on Sparsity in LLMs (SLLM) 2025


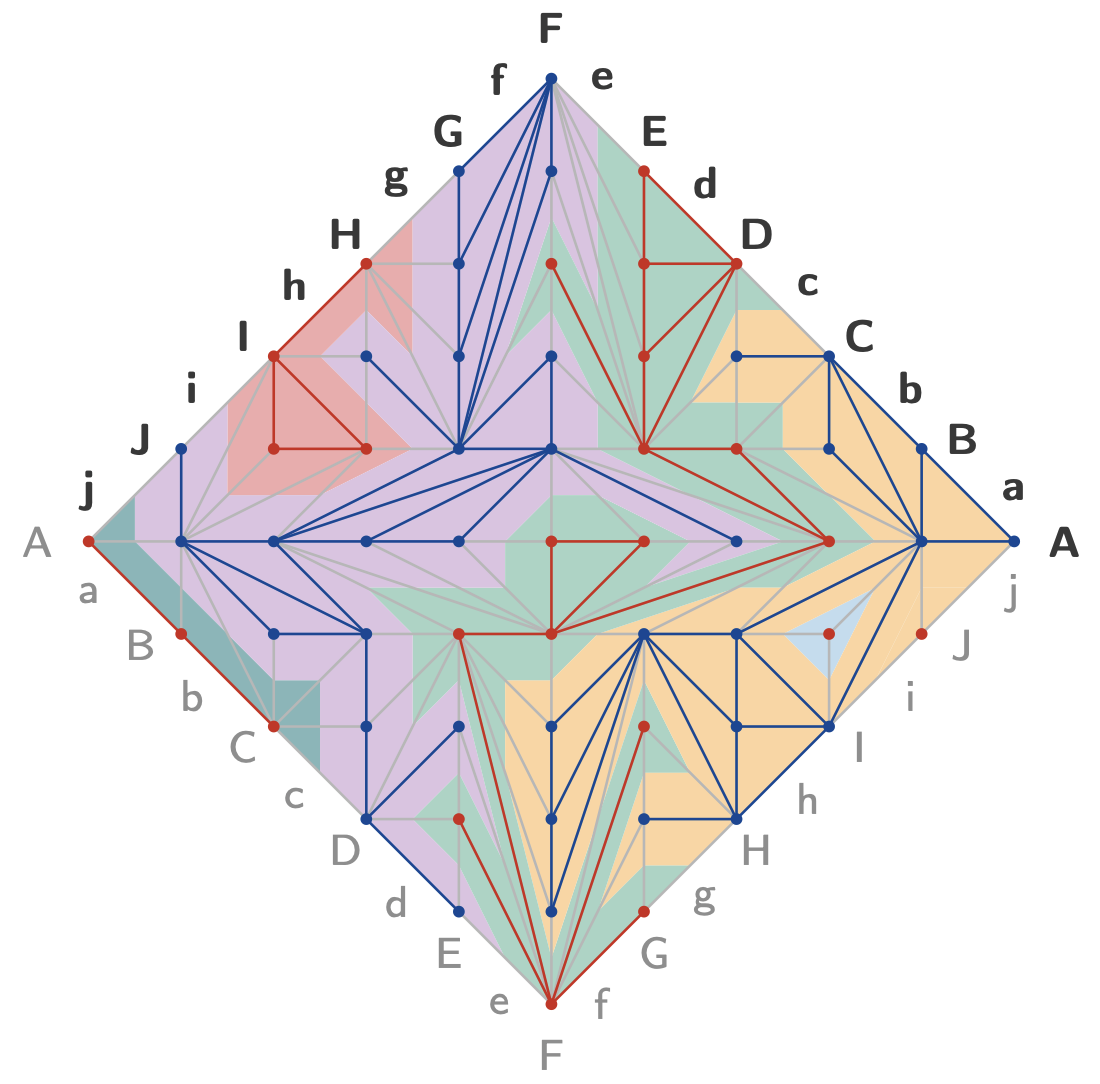

ICML24 Forty-first International Conference on Machine Learning 2024



Workshop ICLR24 Workshop on AI4DifferentialEquations In Science 2024


Preprint arXiv preprint arXiv:2312.15230 2023